Unlike descriptive and predictive analytics, which focus on understanding past data and predicting future trends, prescriptive analytics provides actionable recommendations on what steps to take next. Below are nine of the best prescriptive analytics tools to help you forecast the business weather and prepare for the storms ahead.

- Alteryx: Best for end-user experience

- Azure Machine Learning: Best for data privacy

- SAP Integrated Business Planning: Best for supply chain optimization

- Looker: Best for data modeling

- Tableau: Best for data visualization

- Oracle Autonomous Data Warehouse: Best for scalable data management

- RapidMiner Studio: Best for data mining and aggregation

- IBM Decision Optimization: Best for machine learning

- KNIME: Best for data science flexibility on a budget

| Product | Score | Best For | Key Differentiator | Pricing | Free Trial/Free Plan |
| --- | --- | --- | --- | --- | --- |
| Alteryx | 4.46 | Best end-user experience | User-friendly interface with drag-and-drop functionality | Starts at $4,950/year | Yes/No |
| Azure Machine Learning | 4.40 | Best data privacy | Advanced security features and integration with Azure services | Pay-as-you-go pricing | Yes/No |
| SAP Integrated Business Planning | 4.32 | Best for supply chain optimization | Real-time supply chain analytics and planning | Subscription-based pricing | Yes/No |
| Looker | 4.30 | Best for data modeling | Strong data modeling capabilities and integration with Google Cloud | Custom pricing | Yes/No |
| Tableau | 4.23 | Best for data visualization | Industry-leading data visualization tools | Starts at $75/user/month | Yes/No |
| Oracle Autonomous Data Warehouse | 4.18 | Best for scalable data management | Elastic scaling and built-in machine learning capabilities | Based on Oracle Cloud services | Yes/No |
| RapidMiner Studio | 4.18 | Best data mining and aggregation | Comprehensive data mining and machine learning tools | Starts at $2,500/year | Yes/Yes |
| IBM Decision Optimization | 4.15 | Best machine learning | Powerful optimization solvers and integration with Watson Studio | Starts at $199/user/month | Yes/No |
| KNIME | 4.11 | Best data science flexibility on a budget | Open-source platform with extensive data integration capabilities | Free for basic version | Yes/Yes |

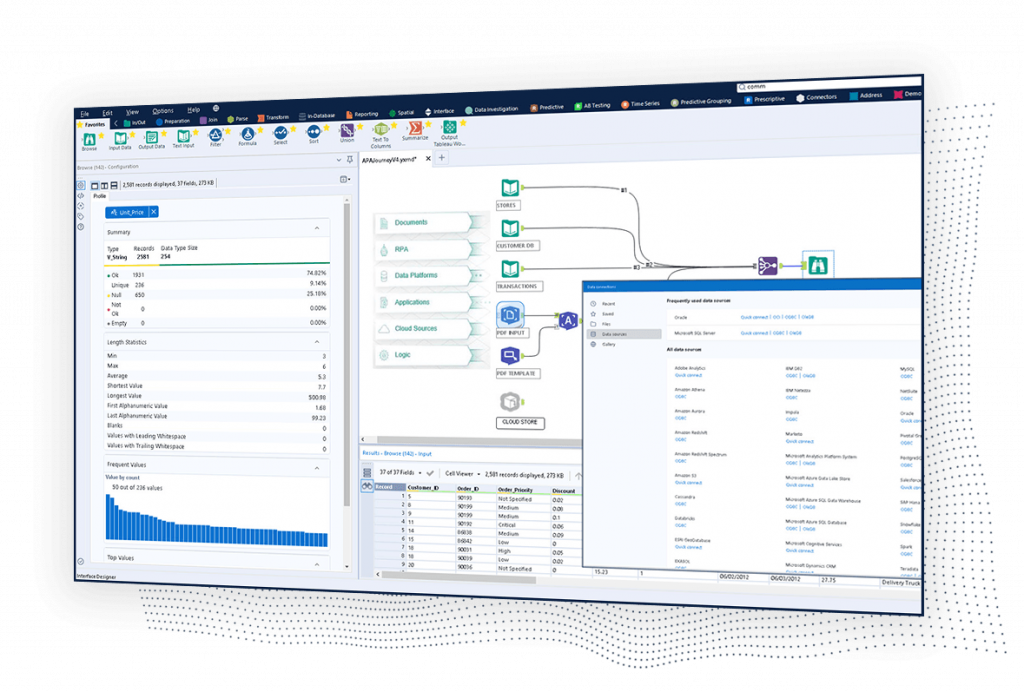

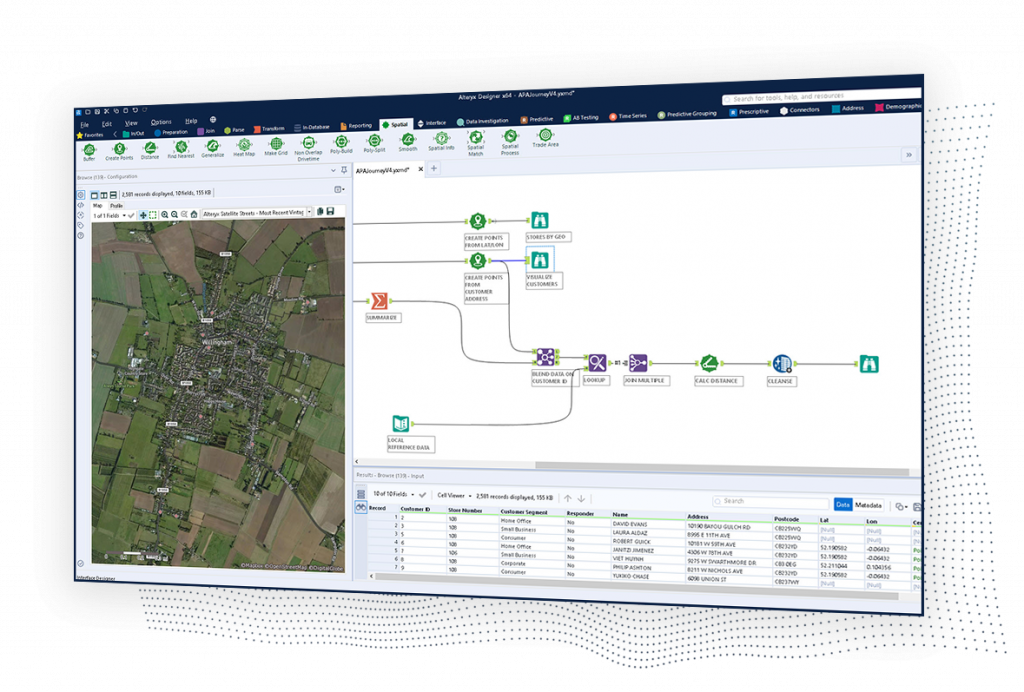

Alteryx: Best for end-user experience

| Category | Score |
| --- | --- |
| Overall Score | 4.46/5 |
| Pricing | 2.7/5 |
| General features and interface | 4.7/5 |
| Core features | 4.8/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 5/5 |
| UX | 4.3/5 |

Pros

- Intuitive workflow

- Data blending capabilities

- Advanced analytics

- Data visualization

Cons

- Complex for beginners

- Limited collaboration features

- Cost

Why we chose Alteryx

Alteryx’s simple interface helps break down complex data workflows, making data analysis accessible even to non-coders. Coupled with a comprehensive suite of pre-built analytic models and an extensive library of connectors, it lets you move from raw data to actionable insights without writing code. Alteryx Academy further eases adoption, and Alteryx Community, a platform for peer support and learning, underlines why it is our top choice for the best end-user experience.

KNIME, another strong contender known for its flexibility and budget-friendly options, still falls short in user experience compared to Alteryx. While KNIME offers powerful data analytics capabilities, its interface can be less intuitive, requiring more technical knowledge to navigate. Alteryx, on the other hand, prioritizes a user-friendly design, making it easier for users at all technical levels to perform complex analytics tasks without extensive training.

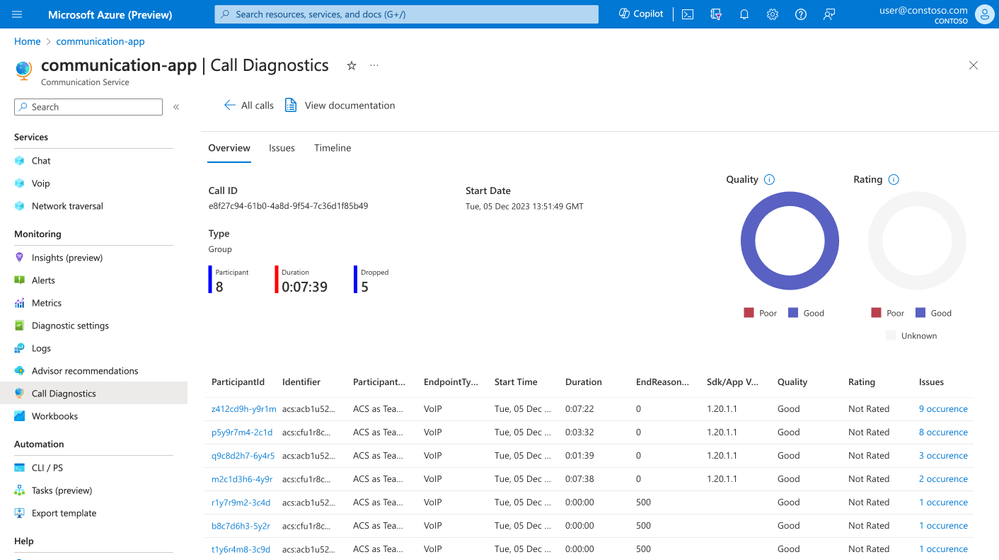

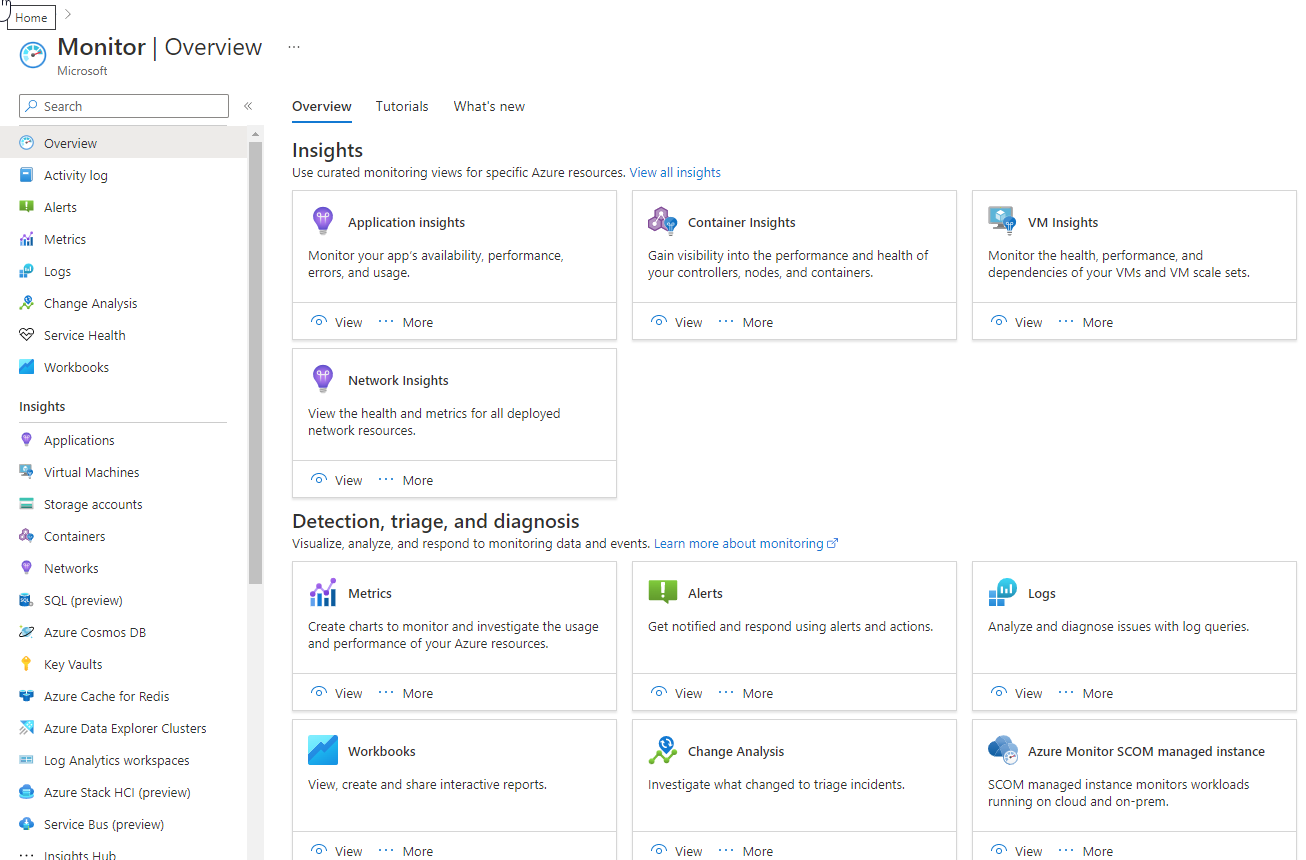

Azure Machine Learning: Best for data privacy

| Category | Score |
| --- | --- |
| Overall Score | 4.40/5 |
| Pricing | 2.5/5 |
| General features and interface | 4.5/5 |
| Core features | 5/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 5/5 |
| UX | 4.5/5 |

Pros

- Top-notch security

- Built-in privacy features

- Enterprise-level control

Cons

- Dependency on Microsoft ecosystem

- Limitations in free tier

Why we chose Azure Machine Learning

As part of the Azure environment, Azure Machine Learning benefits from all the security features used to protect the cloud service at large. Similar to how Office 365 enables increased controls regarding access privileges, data storage and sharing, and identity management, Azure Machine Learning safeguards connected data pipelines and workflows. Its built-in security measures include advanced threat protection, encryption at rest and in transit, and comprehensive compliance certifications, providing a robust framework for data privacy.

When compared to Oracle Autonomous Data Warehouse, another strong contender known for its security features, Azure Machine Learning stands out for integrated data privacy. Oracle provides excellent data security and compliance, but Azure’s extensive suite of security tools and seamless integration with other Microsoft services offer a more comprehensive approach to data privacy. Azure’s identity management and access controls, along with its ability to monitor and respond to threats in real time, give users a higher level of confidence in the protection of their data.

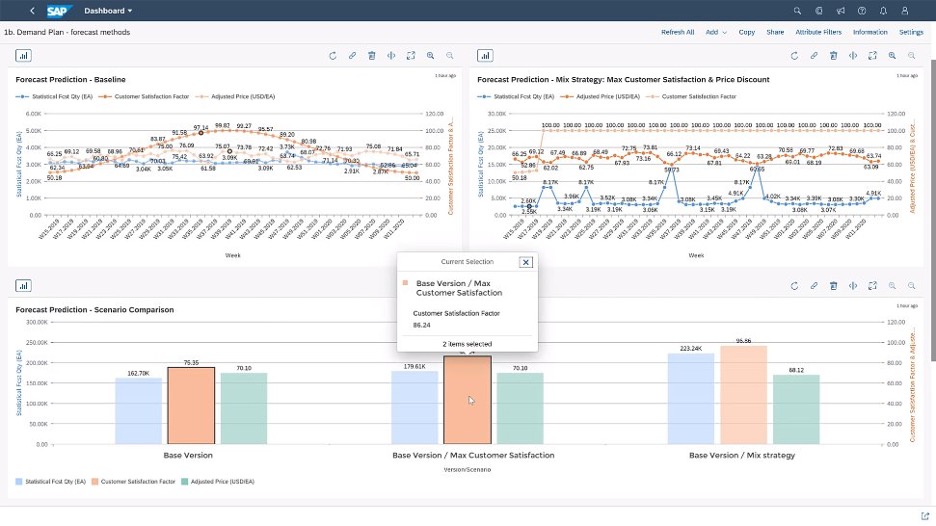

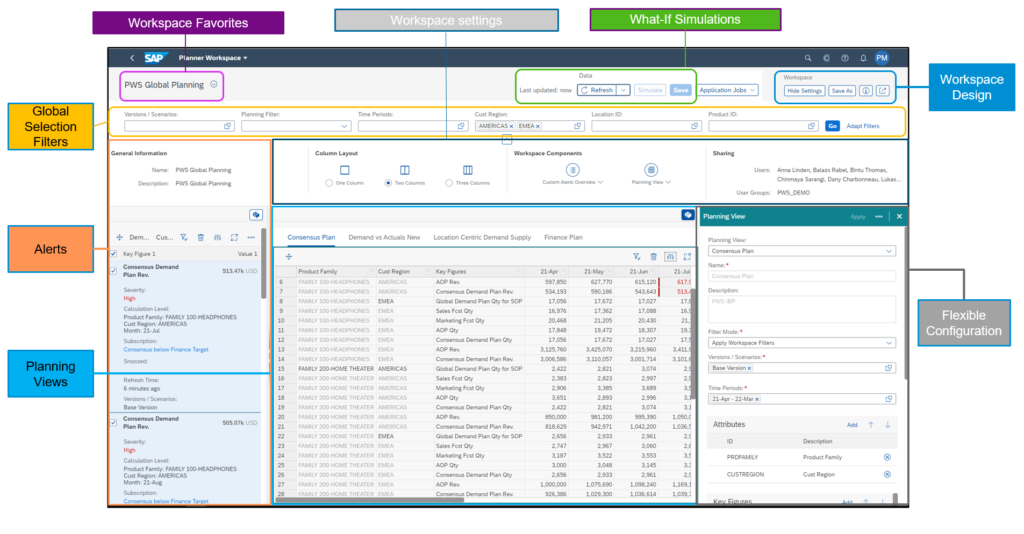

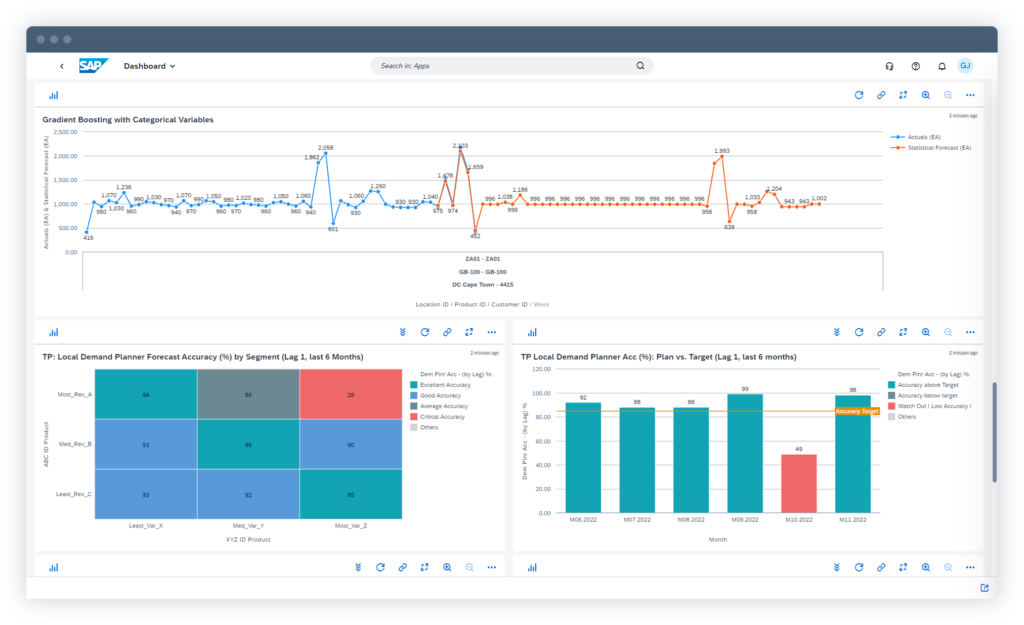

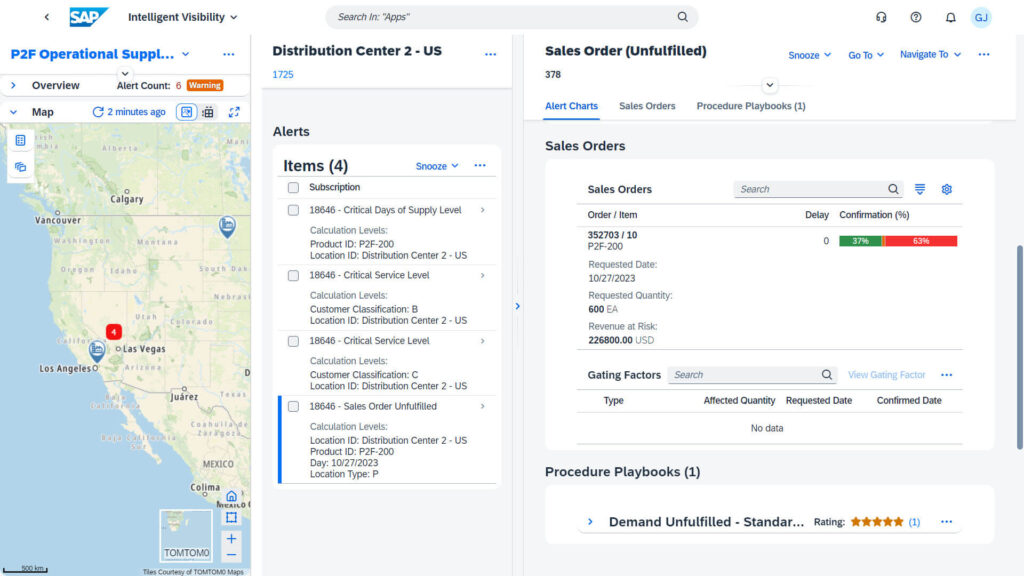

SAP Integrated Business Planning: Best for supply chain optimization

| Category | Score |
| --- | --- |
| Overall Score | 4.32/5 |
| Pricing | 2.9/5 |
| General features and interface | 4.5/5 |
| Core features | 5/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 4.3/5 |
| UX | 4.2/5 |

Pros

- Immediate insights from live data integration

- Scenario planning

- Short-term demand sensing for accuracy

- Single unified data model

- Supply chain control tower

- Strong ERP integration

Cons

- High implementation cost

- Complex integration

Why we chose SAP Integrated Business Planning

With the full might of SAP’s suite behind it, SAP IBP keeps data flowing consistently across business processes. This makes it particularly effective for organizations looking to optimize their supply chain operations comprehensively and efficiently.

SAP IBP integrates key planning processes, including demand sensing, inventory optimization, and sales and operations planning, into a single unified platform.

SAP IBP provides end-to-end supply chain visibility and advanced predictive analytics tailored specifically for supply chain management. Compared with Oracle Autonomous Data Warehouse, which focuses on data management and processing, SAP IBP offers specialized modules for supply chain operations, including demand-driven replenishment and supply chain control tower capabilities, which are not as deeply embedded in Oracle’s offering.

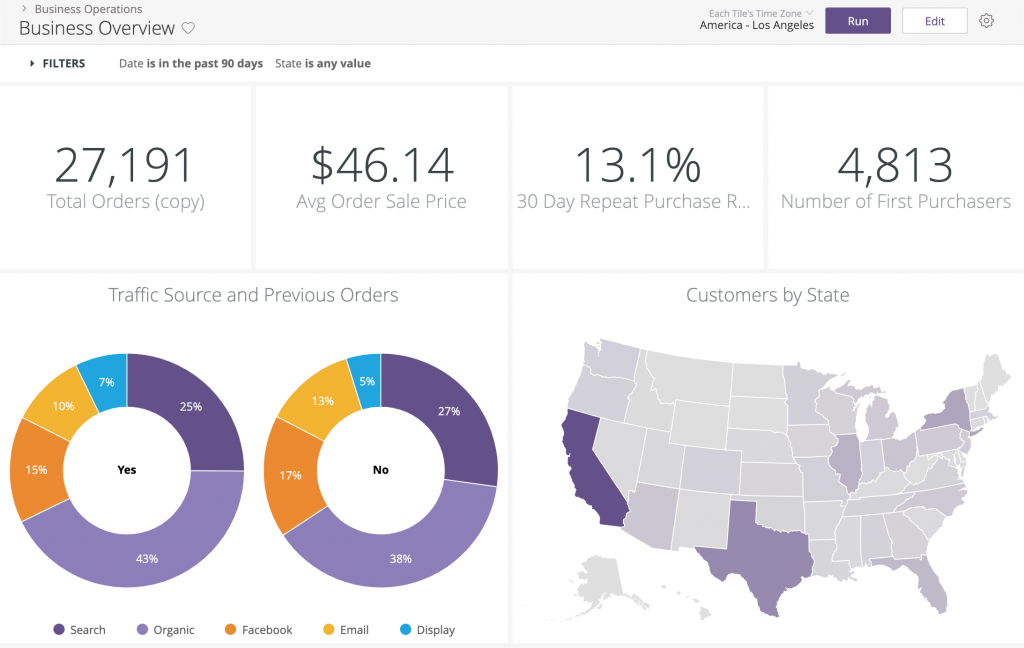

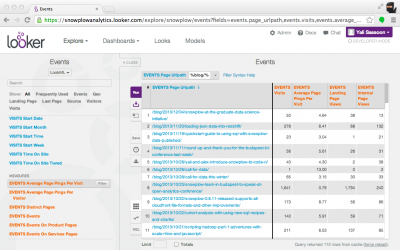

Looker by Google: Best for data modeling

| Category | Score |
| --- | --- |
| Overall Score | 4.30/5 |
| Pricing | 3.3/5 |
| General features and interface | 3.9/5 |
| Core features | 3.5/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 5/5 |
| UX | 3.5/5 |

Pros

- Built-in IDE for data modeling

- Versatile data access

- Enhanced collaboration

- Integration with R

Cons

- Dependency on LookML

- Limited pre-built visualization types

- Performance scaling issues reported

Why we chose Looker by Google

Looker’s secret weapon is its ability to create powerful, scalable data models using its LookML language. It allows teams to curate and centralize business metrics, fostering better data governance. Plus, its in-database architecture means models can handle large datasets without performance trade-offs. Looker’s versatility and adaptability, including its integration capabilities with SQL and other data sources, make it ideal for businesses that need an intuitive data modeling platform.

The platform’s most natural competitor, Tableau, still leaves something to be desired when it comes to data modeling. Tableau’s strengths lie in its visual analytics, but it falls short in its data modeling capabilities. Looker allows for more sophisticated and reusable data models through LookML, ensuring centralized management and consistency across the organization. Looker’s ability to integrate with SQL databases without data extraction enhances its performance, making it more efficient.

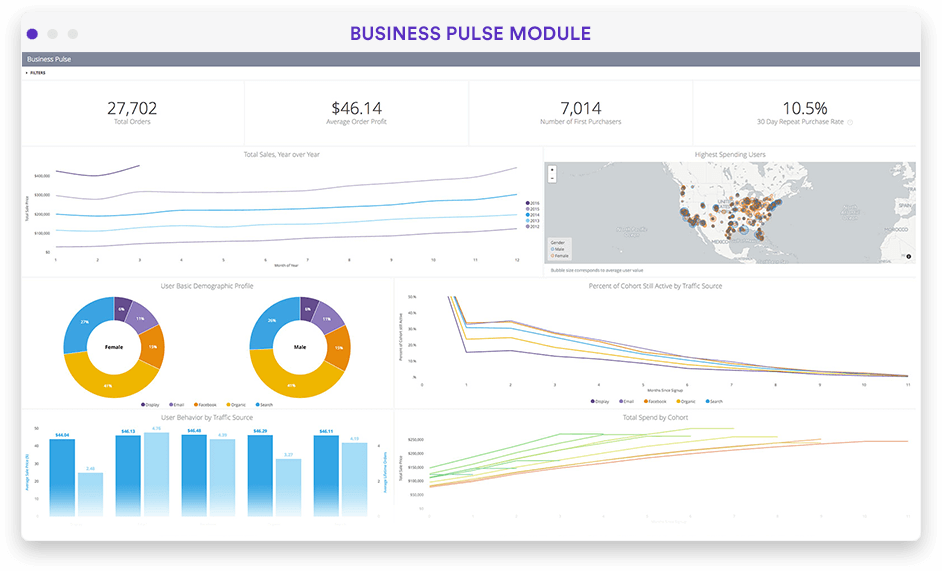

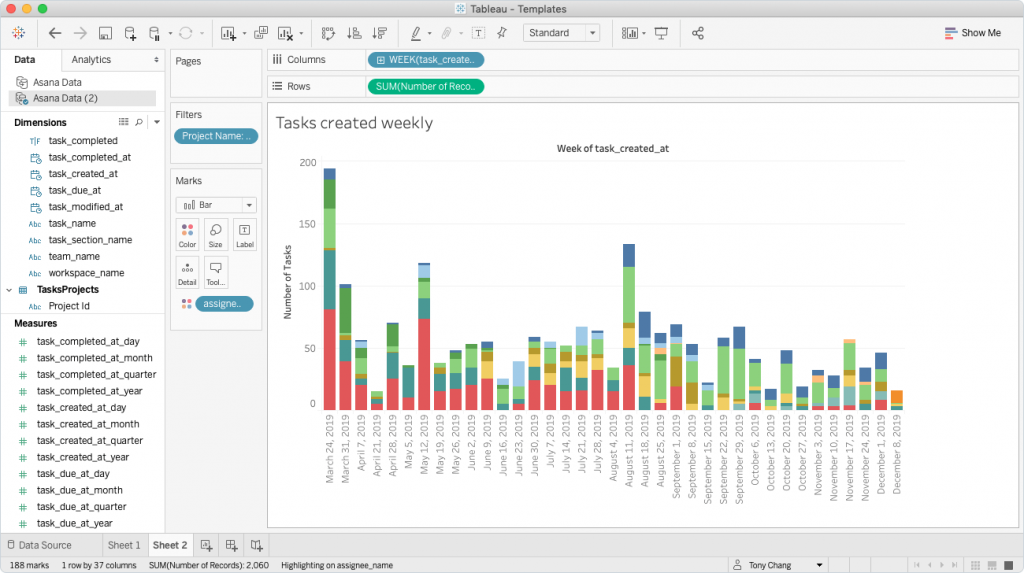

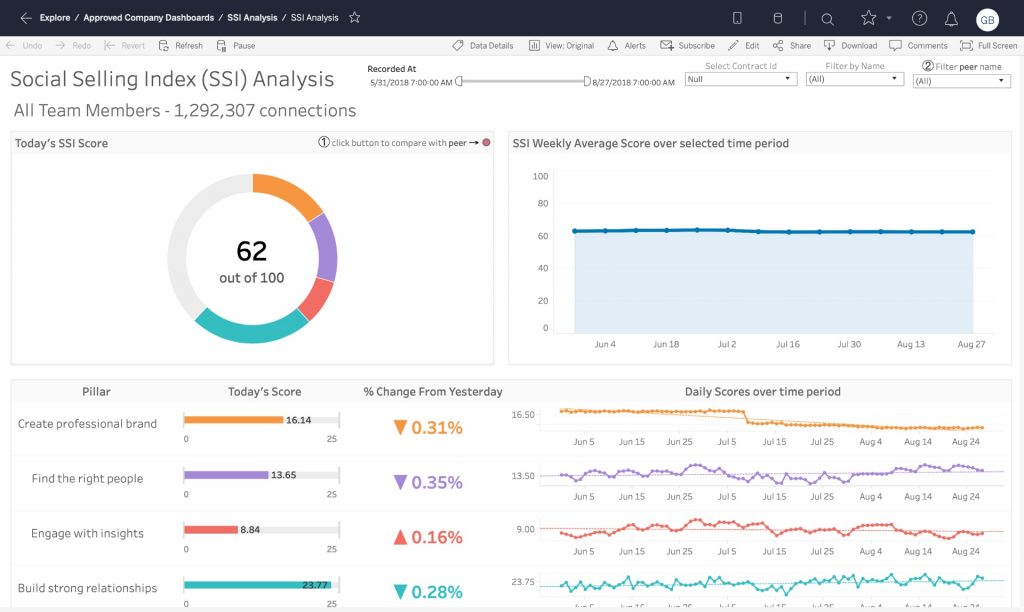

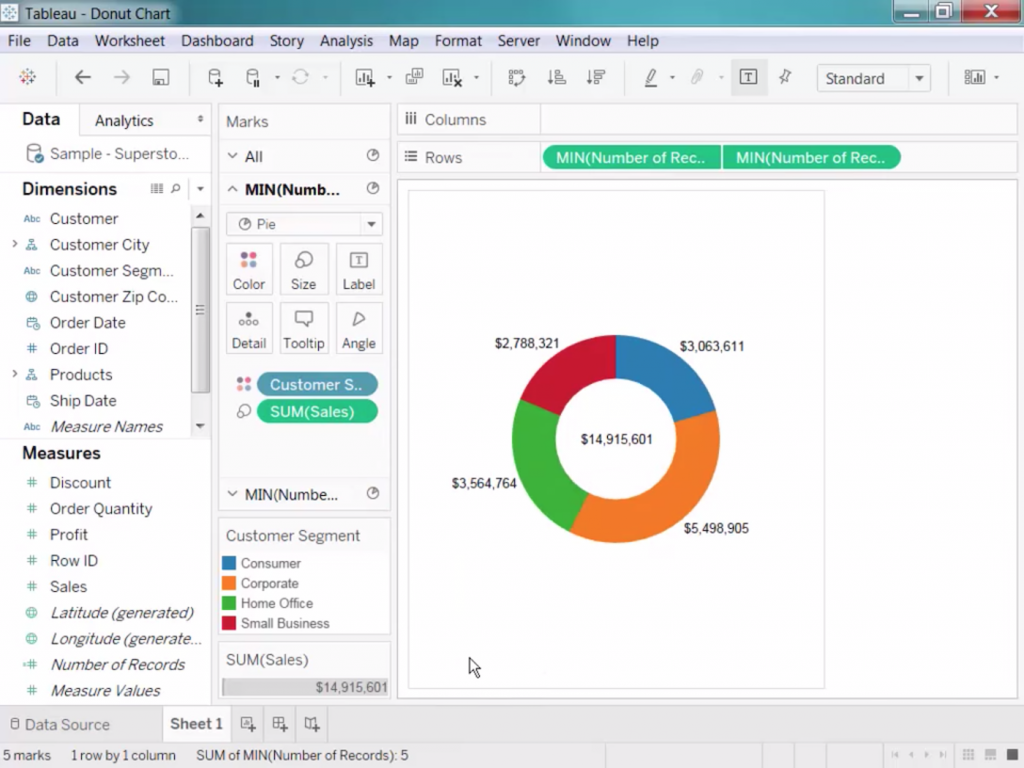

Tableau: Best for data visualization

| Category | Score |
| --- | --- |
| Overall Score | 4.23/5 |
| Pricing | 2.1/5 |
| General features and interface | 4.3/5 |
| Core features | 4.8/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 5/5 |
| UX | 4/5 |

Pros

- User-friendly interface

- Wide range of visualization options

- Powerful data handling

- Strong community and resources

Cons

- Data connectivity issues

- Limited data preparation

- Costly for large teams

Why we chose Tableau

Tableau is well-known for its ability to turn complex data into comprehensible visual narratives. Its intuitive, drag-and-drop interface makes it accessible for non-technical users while still offering depth for data experts. The large array of visualization options, from simple bar graphs to intricate geographical maps, allows for highly customized presentations of data. With top-notch real-time analytics, mobile-ready dashboards, and secure collaboration tools, Tableau proves to be an invaluable asset for quick, accurate decision-making.

When compared to Microsoft Power BI, another platform known for its data visualization, Tableau excels in providing more sophisticated and customizable visualization options. While Power BI integrates well with other Microsoft products and offers competitive pricing, its visualization capabilities are not as advanced or flexible as Tableau’s. Tableau’s ability to handle large datasets and perform real-time analytics without compromising performance sets it apart. Additionally, its extensive community support and continuous updates ensure that it remains at the forefront of data visualization technology.

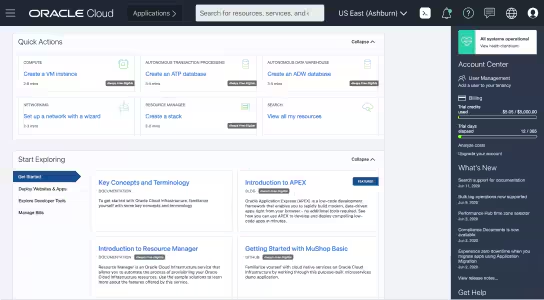

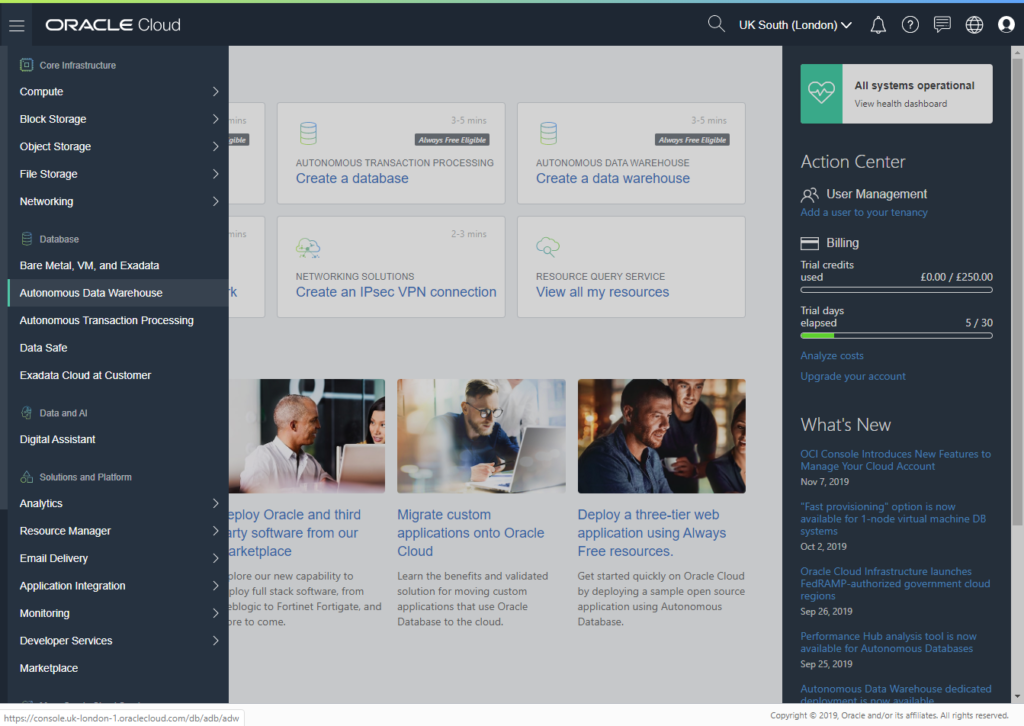

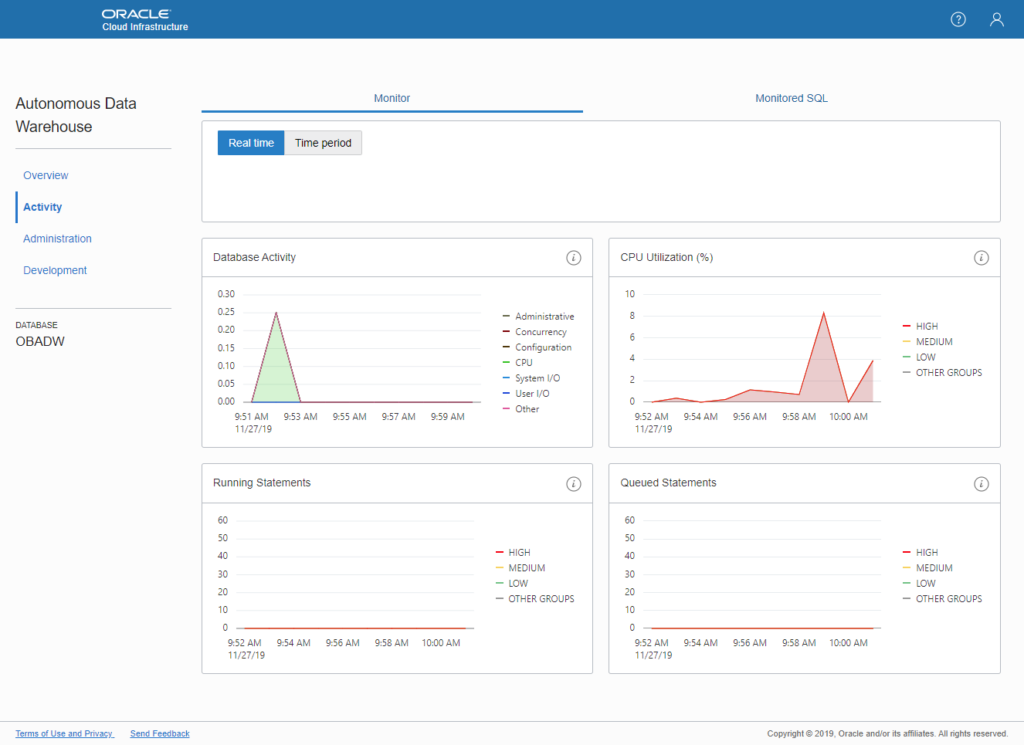

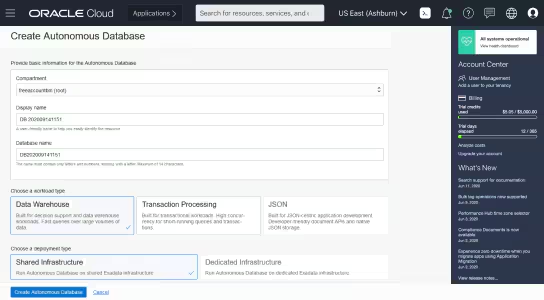

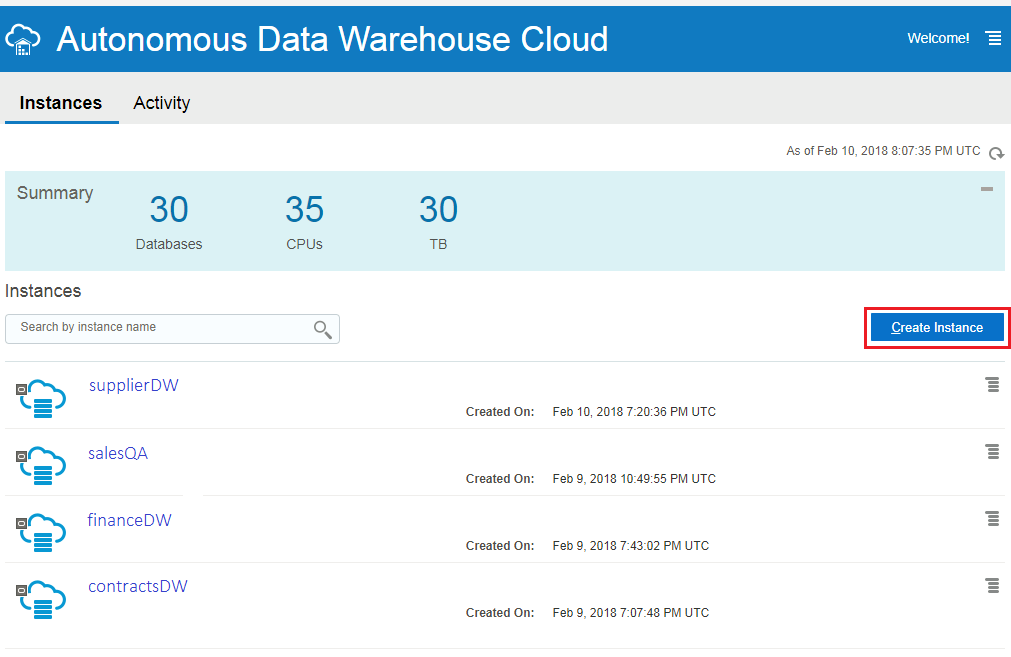

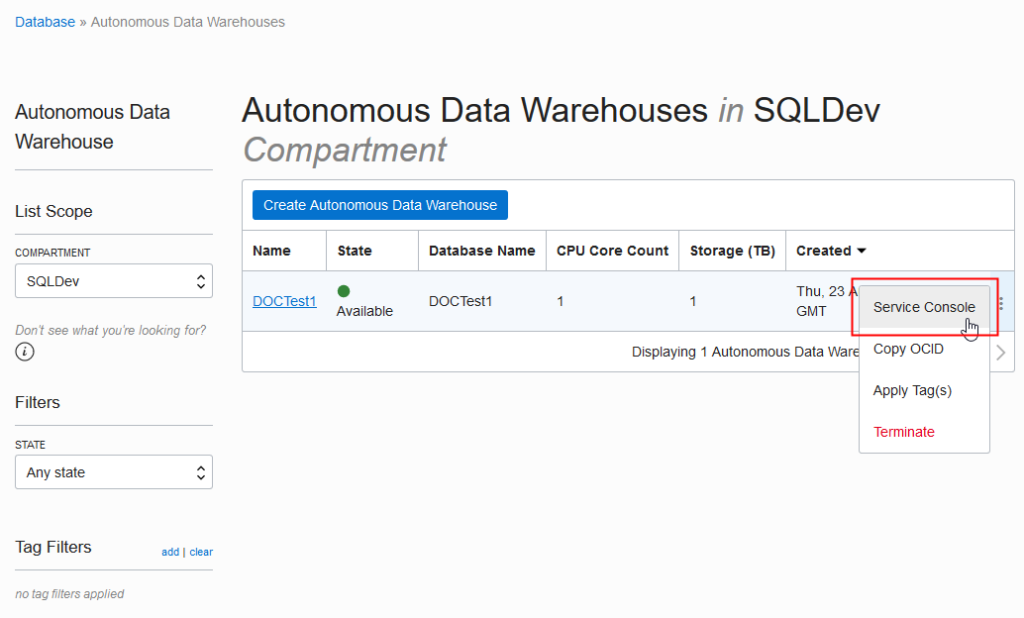

Oracle Autonomous Data Warehouse: Best for scalable data management

| Category | Score |
| --- | --- |
| Overall Score | 4.18/5 |
| Pricing | 2.5/5 |
| General features and interface | 4.2/5 |
| Core features | 5/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 4/5 |
| UX | 4/5 |

Pros

- Optimized query and workload handling

- Integrates with Oracle services

- Elastic scaling

- Self-managing automation

Cons

- Dependency on Oracle ecosystem

- Complex auto-scaling management

Why we chose Oracle Autonomous Data Warehouse

Oracle Autonomous Data Warehouse is designed to take the heavy lifting out of database operations while delivering impressive performance and adaptability. Imagine a system that grows with your business, automatically adjusting its resources based on your needs.

While IBM Decision Optimization brings strong machine learning capabilities to the table, it can’t match Oracle’s seamless scalability and automated management. Oracle goes a step further by baking machine learning right into the system, helping to fine-tune performance, bolster security, and streamline backups.

But it’s not just about handling more data. Oracle’s system plays well with others, integrating smoothly with its cloud ecosystem and a variety of enterprise tools.

Perhaps most impressively, Oracle allows you to perform sophisticated data analysis and predictive modeling right within the warehouse. This in-database machine learning feature is a game-changer for efficiency and insights.

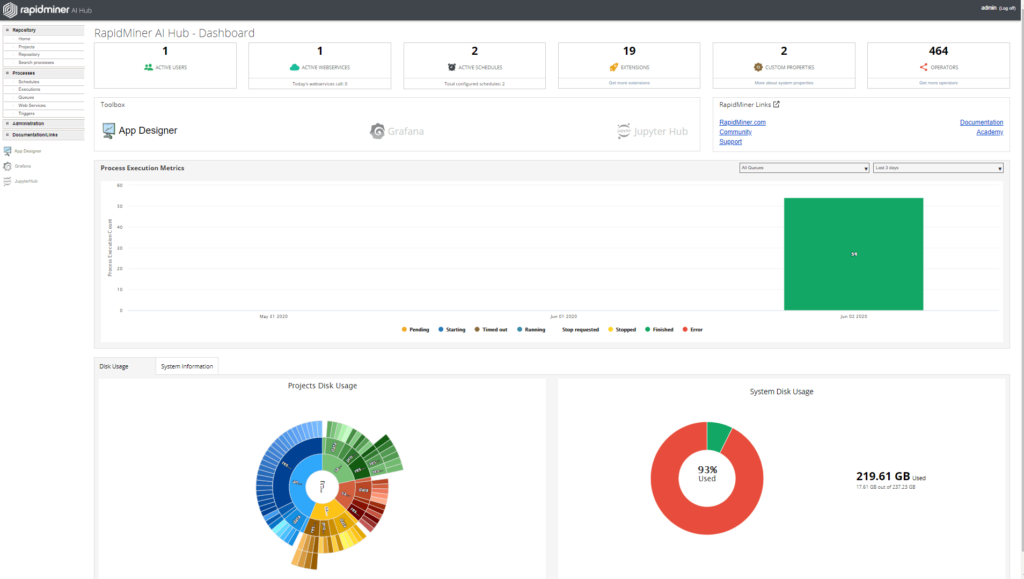

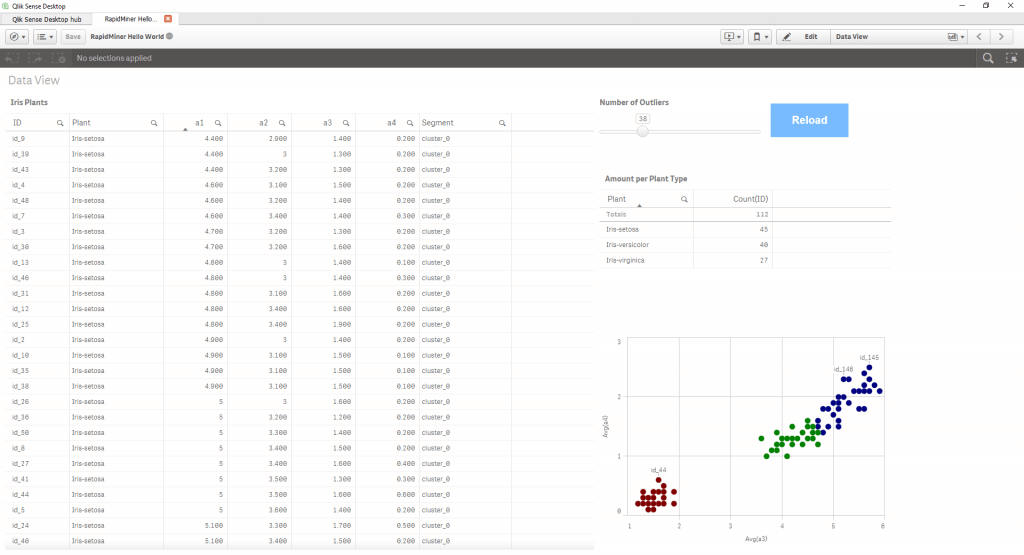

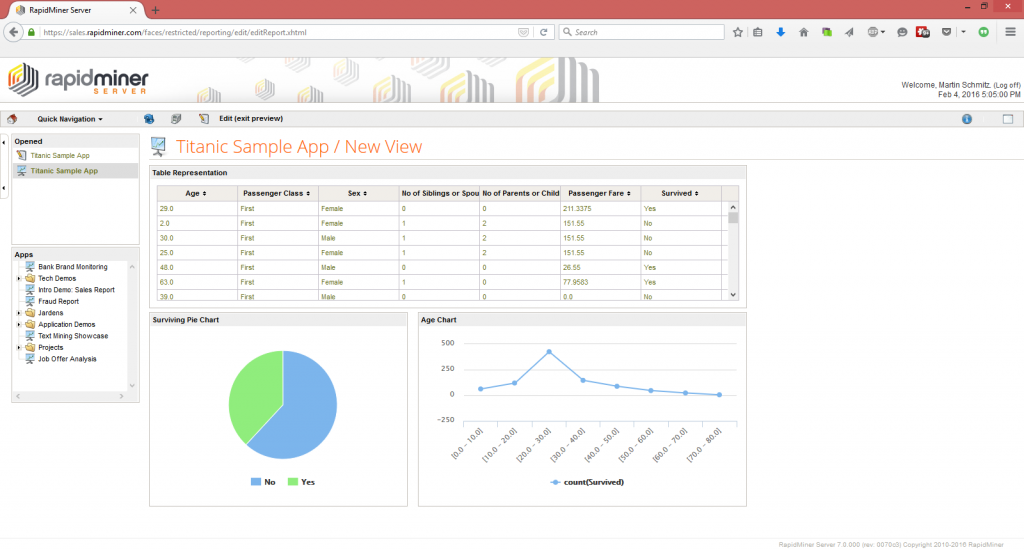

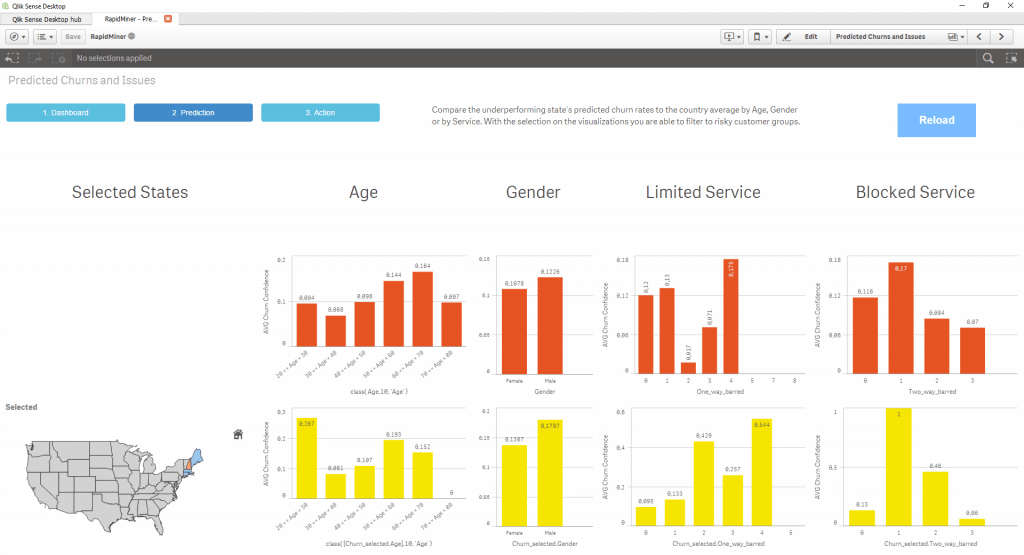

RapidMiner Studio: Best for data mining and aggregation

| Category | Score |
| --- | --- |
| Overall Score | 4.18/5 |
| Pricing | 2.5/5 |
| General features and interface | 3.9/5 |
| Core features | 5/5 |
| Advanced features | 4.5/5 |
| Integration and compatibility | 5/5 |
| UX | 4/5 |

Pros

- Excellent data processing capabilities

- Model validation mechanisms

- Parallel processing support

Cons

- Scripting limitations

- Memory consumption

- Complex advanced features may be overwhelming for learners

Why we chose RapidMiner Studio

The most compelling attribute of RapidMiner Studio is the level of nuance it provides during data discovery. ETL processes can be defined with numerous granular modifications, making the process of importing and scrubbing data a lot easier. Even messy, unstructured, or poorly organized data can be quickly parsed and processed once the correct automations are in place.

Data almost always has value, but for humans to leverage it meaningfully, it needs to be formatted in a comprehensible way for both users and AI tools. This is RapidMiner’s strong suit: transforming convoluted piles of information into visualizations, dashboards, and prescriptive insights.

KNIME also offers powerful data integration and manipulation capabilities but often requires more manual configuration and coding knowledge. RapidMiner provides a more user-friendly interface and automation features that streamline the ETL process, making it accessible to users with varying levels of technical expertise. Additionally, RapidMiner’s support for handling unstructured data and its ability to produce actionable insights swiftly make it the preferred choice for organizations focused on efficient data mining and aggregation.

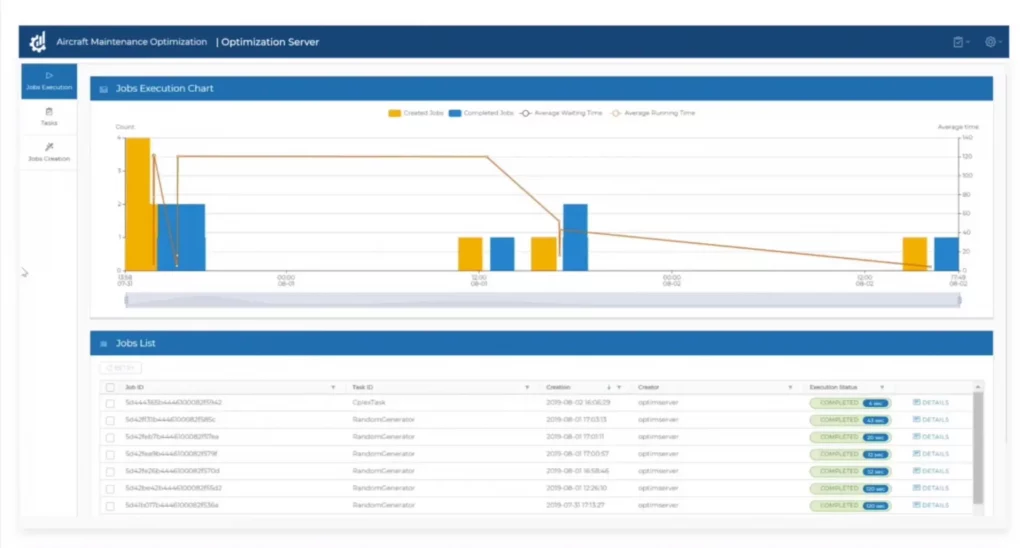

IBM Decision Optimization: Best for machine learning

| Category | Score |
| --- | --- |
| Overall Score | 4.15/5 |
| Pricing | 2.1/5 |
| General features and interface | 4.5/5 |
| Core features | 5/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 4.4/5 |
| UX | 3.8/5 |

Pros

- Advanced optimization algorithms

- Integration with machine learning

- Scalability

- Customizable models

Cons

- Limited documentation

- Inflexible licensing

- Requires expertise

Why we chose IBM Decision Optimization

IBM has been a major player in computer technologies for decades, having transitioned from producing hardware to developing cutting-edge machine learning systems. Their expertise in this area has placed them at the forefront of business intelligence and prescriptive analytics. While IBM Watson often receives the most attention, IBM Decision Optimization is an equally impressive part of IBM’s extensive suite of BI tools, enabling large-scale enterprises to transform their operational data into powerful optimization solutions.

Alteryx is a very similar competitor, also offering strong data preparation and predictive analytics but lacking the sophisticated optimization capabilities that IBM provides. A key differentiator is IBM’s use of CPLEX solvers, which allow for complex, large-scale optimization problems to be solved efficiently—a feature Alteryx does not offer.

IBM also has the advantage of offering seamless integration with Watson Studio. This gives you direct utilization of machine learning models within optimization workflows, providing a streamlined, high-performance solution for real-time data processing and scenario planning. Alteryx, while strong in its domain, requires more manual effort to combine predictive and prescriptive analytics, limiting its efficiency in handling complex optimization scenarios.
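
To make "optimization problem" concrete, here is a minimal Python sketch of the kind of question a solver answers: choose production quantities that maximize profit without exceeding resource limits. The two products, the profit figures, and the limits are all hypothetical. The exhaustive search below is only a stand-in for a real solver; it is not CPLEX and does not use any IBM API, and it only works because the problem is tiny.

```python
from itertools import product

# Hypothetical product-mix problem (all numbers are illustrative assumptions):
# maximize 3*x + 5*y subject to three resource limits.
PROFIT_PER_UNIT = {"x": 3, "y": 5}


def is_feasible(x: int, y: int) -> bool:
    """Check the three hypothetical resource constraints."""
    return x <= 4 and 2 * y <= 12 and 3 * x + 2 * y <= 18


def best_mix(max_x: int = 4, max_y: int = 6) -> tuple[int, int, int]:
    """Enumerate every integer plan and return (x, y, profit) for the best one."""
    best = (0, 0, 0)
    for x, y in product(range(max_x + 1), range(max_y + 1)):
        if not is_feasible(x, y):
            continue
        profit = PROFIT_PER_UNIT["x"] * x + PROFIT_PER_UNIT["y"] * y
        if profit > best[2]:
            best = (x, y, profit)
    return best


if __name__ == "__main__":
    x, y, profit = best_mix()
    print(f"Recommended plan: make {x} of x and {y} of y for a profit of {profit}")
```

Enumeration like this stops being practical once a model has more than a handful of variables, which is the gap that dedicated optimization solvers are built to fill.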

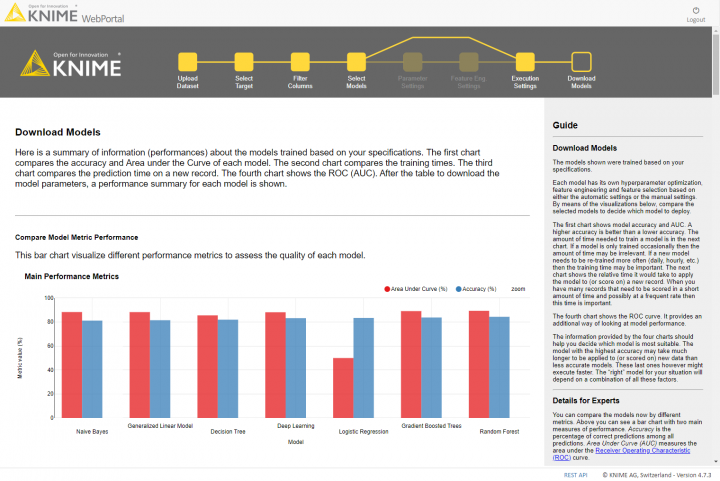

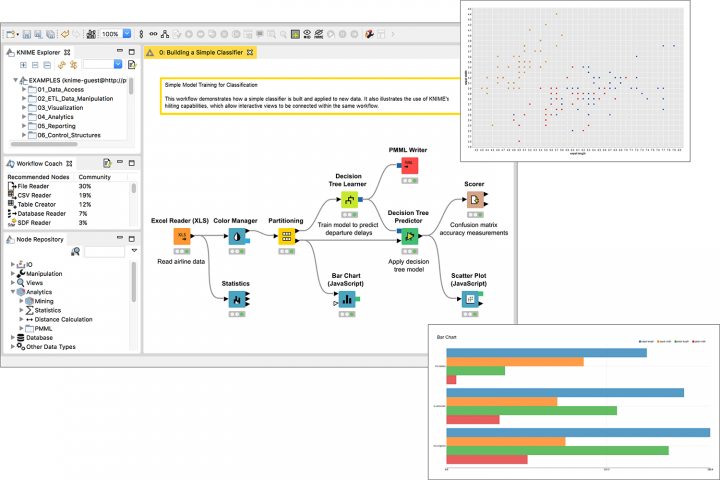

KNIME: Best for data science flexibility on a budget

| Category | Score |
| --- | --- |
| Overall Score | 4.11/5 |
| Pricing | 3.3/5 |
| General features and interface | 3.9/5 |
| Core features | 3.5/5 |
| Advanced features | 5/5 |
| Integration and compatibility | 5/5 |
| UX | 3.5/5 |

Pros

- Open source

- Extensive integration options

- Extensive analytics capabilities

- Strong community support

Cons

- Resource-intensive workflows

- Limited in-built visualizations

- Complex deployment

Why we chose KNIME

While KNIME lacks the sleek, push-button UIs that most other BI tools present, this isn’t necessarily a drawback, depending on the use case. For those in need of high levels of customization and the ability to shape the models and learning algorithms to their data pipelines, workflows, and native environments, KNIME has a lot to offer.

Additionally, KNIME is free to use for individual users, and its more DIY structure facilitates lower costs than other solutions when adding to the user base. KNIME’s “data sandbox” is perfect for data teams that want to supercharge their efforts but don’t need to offer widespread end-user access to the tools themselves.

When compared to RapidMiner Studio, another competitor known for its strong data mining and aggregation capabilities, KNIME wins in the categories of flexibility and cost-effectiveness. RapidMiner offers a more guided experience with its automation features, but this comes at a higher price point and with less customization. KNIME, in contrast, provides a more open environment where data scientists can build highly tailored workflows without being constrained by pre-built processes.

Prescriptive analytics

A quick breakdown of the four common functions of business intelligence:

| Type | Question | Purpose |
| --- | --- | --- |
| Descriptive Analytics | The “What” | Used to organize data, parse it, and visualize it to identify trends. |
| Diagnostic Analytics | The “Why” | Used to analyze trends, examine their progress over time, and establish causality. |
| Predictive Analytics | The “When” | Used to compile trend and causality data, and extrapolate upcoming changes to anticipate outcomes. |
| Prescriptive Analytics | The “How” | Used to predict possible scenarios, test possible strategies for ROI or loss potential, and recommend actions. |
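
As a rough illustration of how the four functions above build on one another, the Python sketch below runs each of them on the same toy dataset. The sales figures, ad spend, and per-worker capacity are hypothetical, and each step is deliberately simplistic (a mean, a correlation, a straight-line trend, and a rounding rule); real tools use far richer models.

```python
import math

# Toy data (hypothetical): units sold in six consecutive months, plus ad spend.
months = [1, 2, 3, 4, 5, 6]
units_sold = [100, 110, 120, 130, 140, 150]
ad_spend = [10, 11, 12, 13, 14, 15]


def mean(values):
    """Arithmetic mean of a list of numbers."""
    return sum(values) / len(values)


def deviation_product_sum(xs, ys):
    """Sum of products of deviations from the mean (unnormalized covariance)."""
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys))


# Descriptive ("what"): summarize what happened.
average_units = mean(units_sold)

# Diagnostic ("why"): check whether sales move together with ad spend.
# Correlation is only a hint; it does not establish causality on its own.
correlation = deviation_product_sum(ad_spend, units_sold) / math.sqrt(
    deviation_product_sum(ad_spend, ad_spend)
    * deviation_product_sum(units_sold, units_sold)
)

# Predictive ("when"): fit a least-squares trend line and extrapolate to month 7.
slope = deviation_product_sum(months, units_sold) / deviation_product_sum(months, months)
intercept = mean(units_sold) - slope * mean(months)
forecast_next_month = intercept + slope * 7

# Prescriptive ("how"): turn the forecast into a recommended action.
UNITS_PER_WORKER = 45  # hypothetical monthly capacity of one worker
recommended_workers = math.ceil(forecast_next_month / UNITS_PER_WORKER)

if __name__ == "__main__":
    print(f"Descriptive: average monthly sales = {average_units}")
    print(f"Diagnostic: correlation with ad spend = {correlation:.2f}")
    print(f"Predictive: forecast for month 7 = {forecast_next_month:.0f} units")
    print(f"Prescriptive: schedule {recommended_workers} workers")
```

The point of the sketch is the hand-off: only the last step produces an action, and it depends on the forecast produced by the step before it.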

Prescriptive analytics is among the most advanced business applications for machine learning and data science. It requires a significant amount of AI processing and depends on large volumes of reliable data. More importantly, like a human employee, it can be trained to respond to inputs and scenarios over time, improving the recommendations it outputs.

Recent studies, such as one published in the International Journal of Management Information Systems and Data Science, highlight the transformative impact of integrating machine learning with prescriptive analytics to enhance business decision-making processes and competitive advantage.

For a deeper dive on prescriptive analytics and where it fits into the data analysis ecosystem, check out this article on data analysis software.


“Always tell me the odds”: Why prescriptive analytics matter

Prescriptive analytics isn’t a crystal ball. A closer analogy is an independent consultant or a military tactician. It surveys the battlefield and considers numerous scenarios based on likelihood, parameters and circumstantial constraints, intensity of effects on final outcomes, and the options or resources available to the organization.

Then, after simulating the possibilities and comparing current plans to potential alternatives, it makes recommendations to promote the most positive results.
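
The survey-simulate-recommend loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any of the reviewed products work internally: the demand scenarios, their weights, the prices, and the candidate order quantities are all hypothetical.

```python
# Hypothetical demand scenarios: (units demanded, likelihood weight out of 100).
SCENARIOS = [(80, 30), (100, 50), (130, 20)]

UNIT_COST = 6      # cost to stock one unit (assumed)
UNIT_PRICE = 10    # revenue per unit sold (assumed)
SALVAGE_VALUE = 2  # amount recovered per unsold unit (assumed)


def profit(order_qty: int, demand: int) -> int:
    """Profit for one order quantity under one demand scenario."""
    sold = min(order_qty, demand)
    unsold = order_qty - sold
    return UNIT_PRICE * sold + SALVAGE_VALUE * unsold - UNIT_COST * order_qty


def expected_profit(order_qty: int) -> float:
    """Likelihood-weighted average profit across all scenarios."""
    total_weight = sum(weight for _, weight in SCENARIOS)
    weighted = sum(weight * profit(order_qty, demand) for demand, weight in SCENARIOS)
    return weighted / total_weight


def recommend(candidates: list[int]) -> tuple[int, float]:
    """Return the candidate plan with the highest expected profit."""
    best = max(candidates, key=expected_profit)
    return best, expected_profit(best)


if __name__ == "__main__":
    for qty in (80, 100, 130):
        print(f"Order {qty}: expected profit {expected_profit(qty)}")
    plan, value = recommend([80, 100, 130])
    print(f"Recommendation: order {plan} units (expected profit {value})")
```

With these illustrative numbers the middle option comes out ahead: ordering 80 units forgoes sales in the likelier scenarios, while ordering 130 leaves too much unsold stock when demand is low.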

In short, it doesn’t remove the uncertainty from business planning; it reduces the level of disruption caused by unanticipated events or a lack of forethought.

Forecasting outcomes like this can be used to achieve a number of important business goals:

- Preventing or mitigating loss

- Minimizing or avoiding risk factors

- Optimizing processes, schedules, and routes

- Improving resource utilization and limiting downtime

- Anticipating opportunities

With prescriptive analytics, businesses can work proactively, instead of reactively. It’s reassurance and validation when things go according to plan, and it’s a safety net when things take a turn for the catastrophic. Either way, you’ve explored the possibilities via numerous scenarios and simulations, and you’re as prepared as possible for what the future brings.

Choosing the best prescriptive analytics software

Remember, “crazy prepared” is only a negative until everyone needs what you’ve prepared in advance. Hopefully, this list of prescriptive analytics tools will help you find the solution that positions your business as the Batman of your industry. If not, check out our in-depth embedded analytics guide for more insight on how to choose a provider for your use case.
